Canary deployment strategy with OpenShift Service Mesh

In the previous section, we executed a canary deployment using Argo Rollouts without traffic management. In that setup, setting a weight of 10% does not guarantee that 10% of the traffic goes to the new version because, as we explained before, Argo Rollouts only makes a best-effort attempt to achieve the percentage listed in the weight between the new and old versions.

To send the right percentage of traffic to each version, we are going to use traffic management with OpenShift Service Mesh.

Generate film-storage service mesh GitOps Application

First, we need to review a set of objects in our forked Git repository so that the application is deployed correctly following a GitOps model.

For this exercise, we are going to use Helm to render the different Kubernetes objects. Please follow the next section to understand the required objects and configurations.

Review and Modify Rollout strategy

We want you to define the steps to perform the canary deployment. You can choose the number of steps and the percentage of traffic for each one. To learn more about Argo Rollouts, please read the Argo Rollouts documentation.
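As an illustration, the steps list of a canary strategy could look like the following minimal sketch. The weights and pause durations are assumptions chosen for the example, not values required by the workshop; pick whatever fits your test.

```yaml
# Illustrative canary steps (values are assumptions, adjust freely)
steps:
  - setWeight: 10        # route 10% of the traffic to the canary
  - pause:
      duration: 30s      # wait 30 seconds before the next step
  - setWeight: 50        # route half of the traffic to the canary
  - pause:
      duration: 30s
  - setWeight: 80
  - pause:
      duration: 30s
```

After the last step, Argo Rollouts promotes the canary and sends all traffic to it. A pause without a duration (pause: {}) would instead wait for a manual promotion.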

Please edit the file named ./rhsm-canary/templates/film-storage-back-rollout.yaml to define <SET-STEPS> as you want and understand the canary deployment strategy:

    strategy:
      canary:
        dynamicStableScale: true
        analysis:
          templates:
            - templateName: {{ include "service.name" . }}-analysis-template
        trafficRouting:
          istio:
            virtualService:
              routes:
                - primary
              name: {{ include "service.name" . }}
            destinationRule:
              name: {{ include "service.name" . }}
              canarySubsetName: canary
              stableSubsetName: stable
        steps:
          <SET-STEPS>

Modify Helm values

Edit the file named ./rhsm-canary/edit.values.yaml to set the required <OCP_DOMAIN>. You can check its value in the parameters chapter.

    service:
      image:
        version: 0.2
      domain: <OCP_DOMAIN>

Deploy film-storage Application

We are going to create the application film-storage-service-mesh-canary, which we will use to test canary deployment with Service Mesh. Because we will make changes in the application’s GitHub repository, we have to use the repository that you have just forked. Please edit the following YAML and set your own GitHub repository in the repoURL.

apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: film-storage-service-mesh-canary

namespace: %USER%-gitops-argocd

spec:

destination:

name: ''

namespace: %USER%-canary-service-mesh

server: 'https://kubernetes.default.svc'

source:

path: rhsm-canary

repoURL: https://github.com/change_me/workshop-argo-rollouts-resources

targetRevision: HEAD

helm:

valueFiles:

- edit.values.yaml

project: default

syncPolicy:

automated:

prune: false

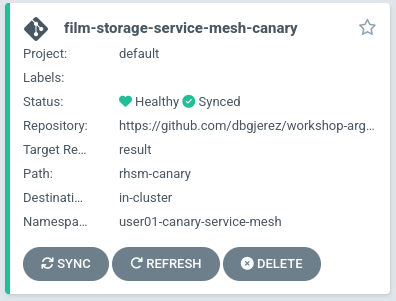

selfHeal: trueoc apply -f argocd/film-storage-service-mesh-canary.yamlLooking at the Argo CD dashboard, you would notice that we have a new film-storage-service-mesh-canary application.

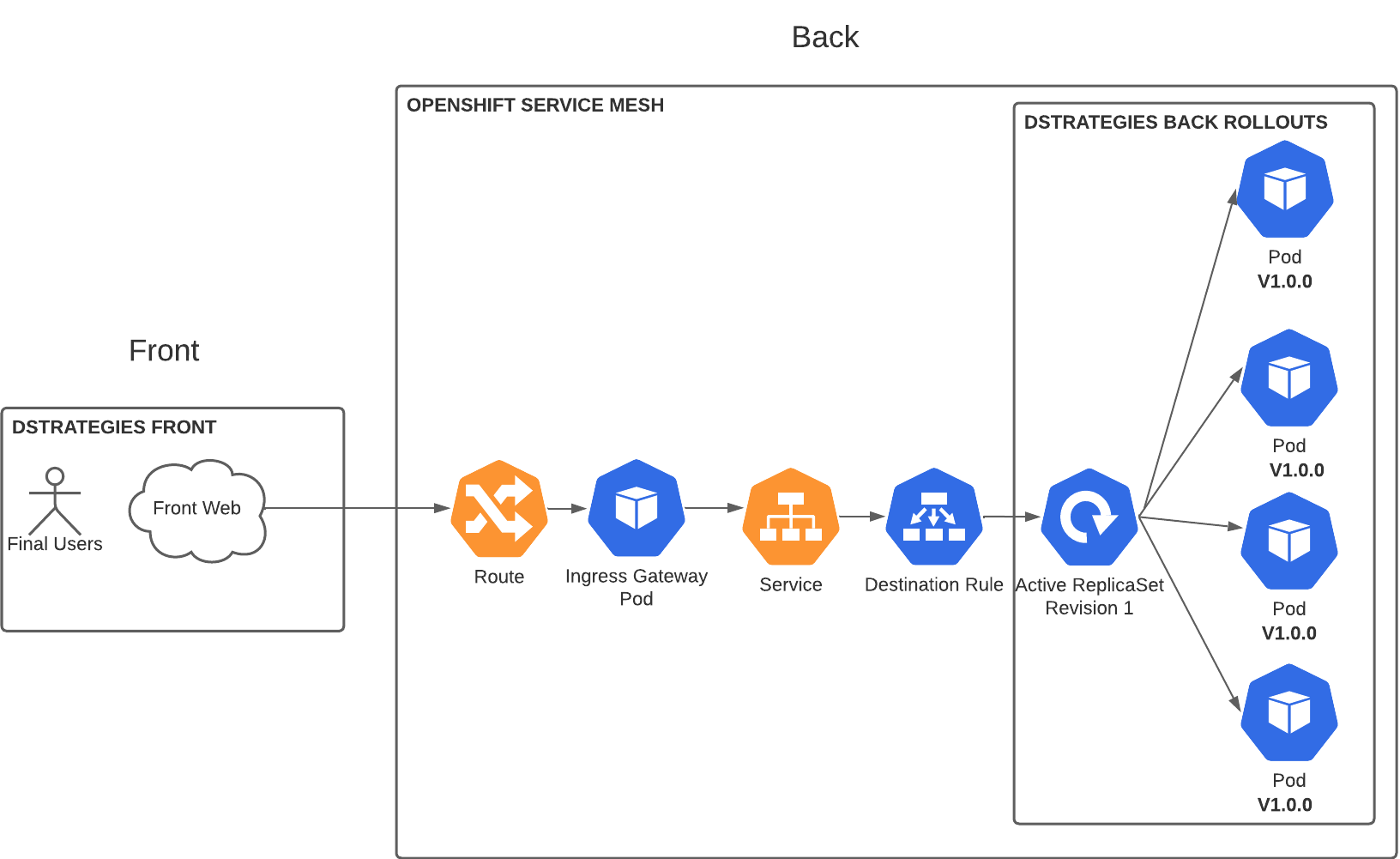

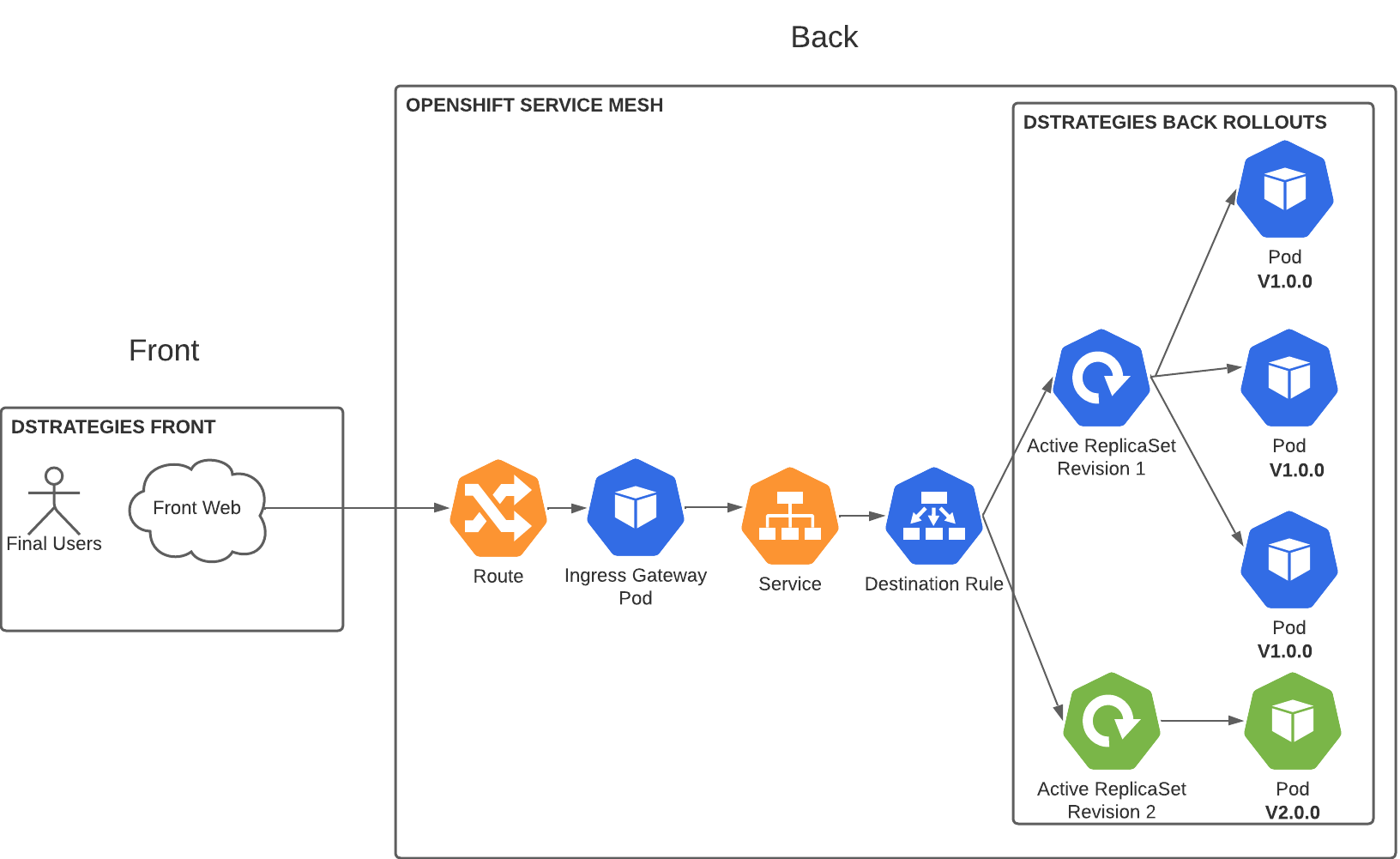

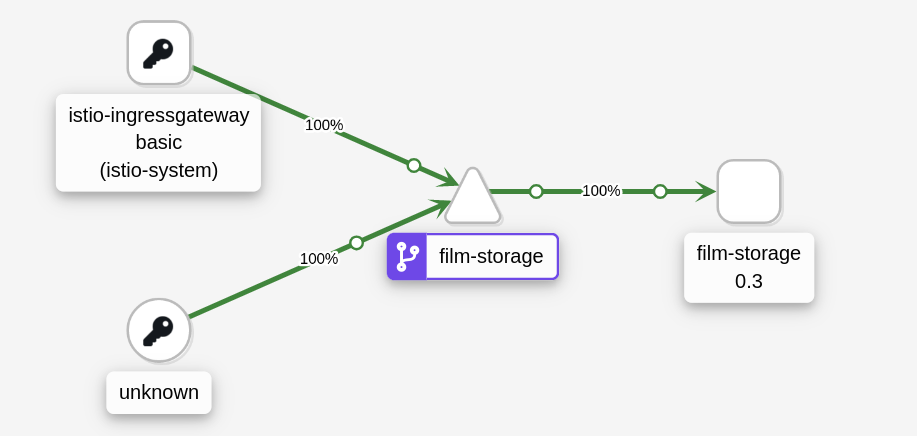

Application architecture

To achieve canary deployment with cloud-native applications using Argo Rollouts and OpenShift Service Mesh, we have designed this architecture.

OpenShift Components

- Only one route and service that maps to the Service Mesh Destination Rule.

- A Destination Rule exposing the pods.
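The Destination Rule referenced by the Rollout needs two subsets whose names match canarySubsetName and stableSubsetName. The following is a minimal sketch, not the chart's actual template: it assumes the rendered service name is film-storage and that the pods carry a hypothetical app: film-storage label. At runtime, Argo Rollouts adds the rollouts-pod-template-hash label to each subset so that each one selects the pods of a single ReplicaSet.

```yaml
# Sketch of a DestinationRule with the two subsets used by the Rollout.
# The host and the "app" label are assumptions for illustration.
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: film-storage
spec:
  host: film-storage
  subsets:
    - name: stable          # matches stableSubsetName
      labels:
        app: film-storage   # rollouts-pod-template-hash is injected by Argo Rollouts
    - name: canary          # matches canarySubsetName
      labels:
        app: film-storage   # rollouts-pod-template-hash is injected by Argo Rollouts
```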

We can also see the rollout’s status:

    oc argo rollouts get rollout film-storage --watch -n %USER%-canary-service-mesh

    NAME                                      KIND        STATUS     AGE  INFO
    ⟳ film-storage                            Rollout     ✔ Healthy  21m
    └──# revision:1
       └──⧉ film-storage-7587cfcc54           ReplicaSet  ✔ Healthy  21m  stable
          ├──□ film-storage-7587cfcc54-7xdgl  Pod         ✔ Running  21m  ready:2/2
          ├──□ film-storage-7587cfcc54-9vczq  Pod         ✔ Running  21m  ready:2/2
          ├──□ film-storage-7587cfcc54-c8fgb  Pod         ✔ Running  21m  ready:2/2
          ├──□ film-storage-7587cfcc54-f6m27  Pod         ✔ Running  21m  ready:2/2
          └──□ film-storage-7587cfcc54-lkrgt  Pod         ✔ Running  21m  ready:2/2

We have defined an active service film-storage. When a new version is deployed, Argo Rollouts creates a new revision (ReplicaSet). The number of replicas in the new release increases based on the information in the steps, and the number of replicas in the old release decreases by the same number. In this case, however, the amount of traffic that is sent to each version is managed by the VirtualService and DestinationRule.

Test film-storage application

We have deployed film-storage with Argo CD. We can test that it is up and running.

Visit the back-end route in your web browser.

Alternatively, send requests to the service repeatedly from a terminal:

    watch curl -s https://film-storage-%USER%-canary-service-mesh-istio-system.apps.%CLUSTER%/api/v1/info

    {"app":{"name":"film-storage","version":"0.2","buildTime":"2023/01/10 15:17:57"}}

film-storage canary deployment

To test the canary deployment, we are going to make changes to the application.

We will deploy a new version, 0.3.

Argo Rollouts will automatically deploy a new film-storage revision. Based on the steps that you have defined, we will see how the traffic is split between the two versions.

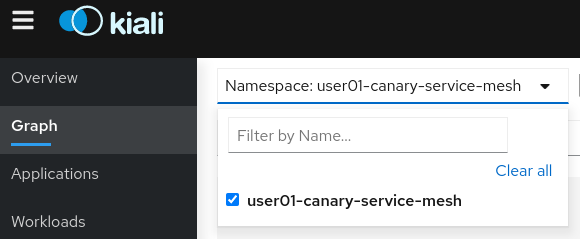

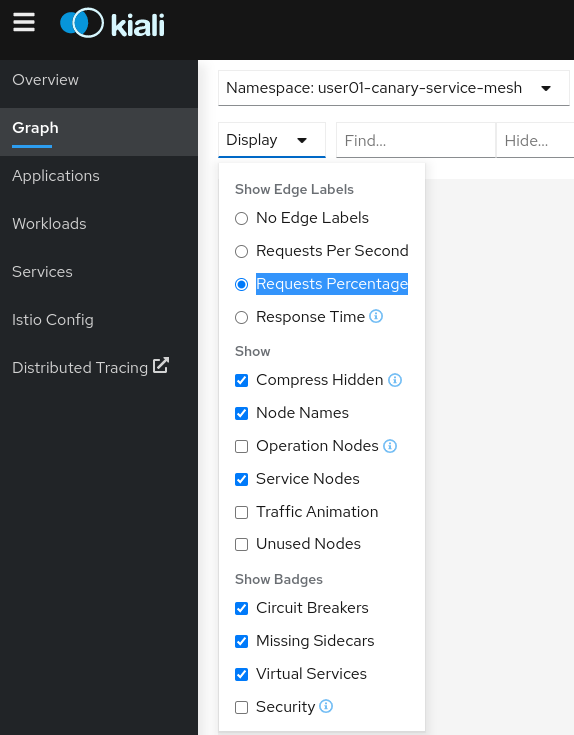

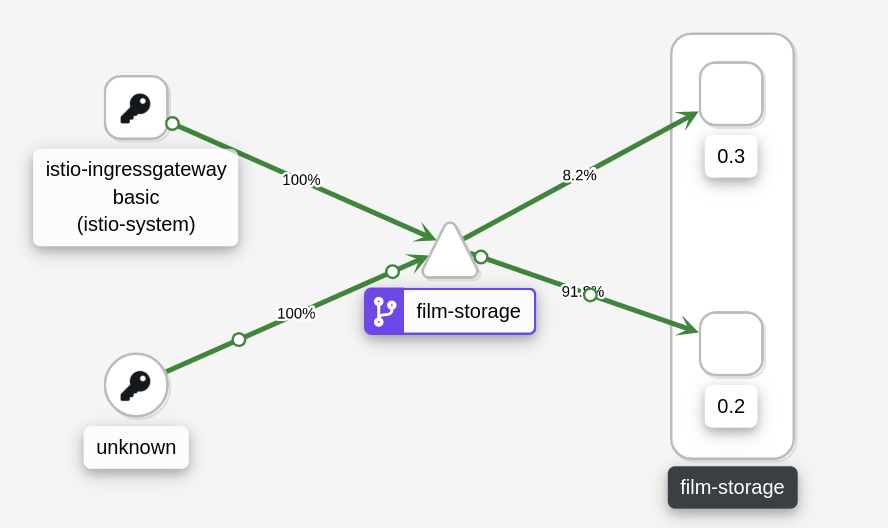

We also encourage you to use the Kiali graph to see the percentage of traffic that goes to each version. Log in with your OpenShift lab-user and select your namespace.

Then go to Display and, under Show Edge Labels, select Requests Percentage.

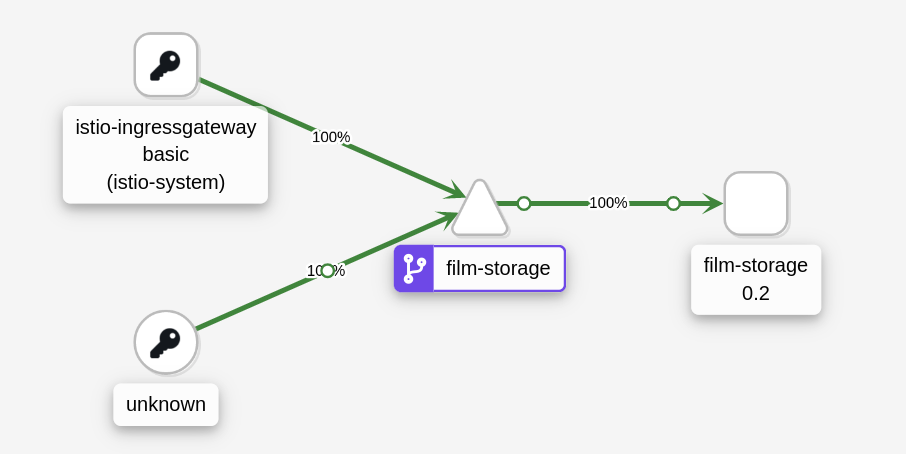

We will see the graph with 100% of the traffic going to version 0.2.

We have to edit the file ./rhsm-canary/edit.values.yaml to change the version value from 0.2 to 0.3:

    version: 0.2 <---

When you are ready to watch all the changes on the fly, push them to start the deployment:

    git add .
    git commit -m "Change version to 0.3"
    git push

Argo CD will refresh the status after a few minutes. If you don’t want to wait, you can refresh it manually from the Argo CD UI.

This is our current status (it may differ depending on the steps you have defined):

    oc argo rollouts get rollout film-storage --watch -n %USER%-canary-service-mesh

    NAME                                      KIND         STATUS        AGE  INFO
    ⟳ film-storage                            Rollout      ॥ Paused      67m
    ├──# revision:2
    │  ├──⧉ film-storage-68c88f96cd           ReplicaSet   ✔ Healthy     19s  canary
    │  │  └──□ film-storage-68c88f96cd-sxvxz  Pod          ✔ Running     18s  ready:2/2
    │  ├──α film-storage-68c88f96cd-2.1       AnalysisRun  ✔ Successful  18s  ✔ 1
    │  └──α film-storage-68c88f96cd-2         AnalysisRun  ✔ Successful  18s  ✔ 1
    └──# revision:1
       └──⧉ film-storage-7587cfcc54           ReplicaSet   ✔ Healthy     67m  stable
          ├──□ film-storage-7587cfcc54-7xdgl  Pod          ✔ Running     67m  ready:2/2
          ├──□ film-storage-7587cfcc54-9vczq  Pod          ✔ Running     67m  ready:2/2
          ├──□ film-storage-7587cfcc54-c8fgb  Pod          ✔ Running     67m  ready:2/2
          ├──□ film-storage-7587cfcc54-f6m27  Pod          ✔ Running     67m  ready:2/2
          └──□ film-storage-7587cfcc54-lkrgt  Pod          ✔ Running     67m  ready:2/2

In Kiali, you can also see how the traffic going to each version changes.

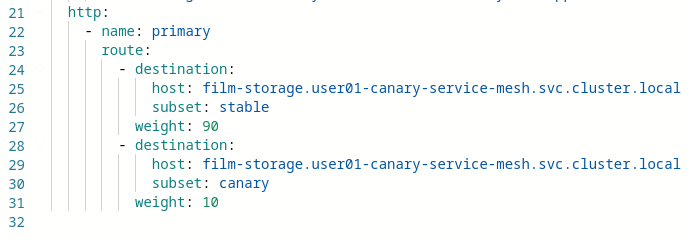

Another important point is how Argo Rollouts automatically changes the percentage of traffic in the VirtualService instance.
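To make this concrete, here is a hedged sketch of what the VirtualService might look like while a step with a 10% canary weight is active. The route name primary and the subset names stable and canary come from the Rollout definition; the resource name, the hosts entry, and the destination host are assumptions for illustration, and any gateway configuration used by the workshop is omitted.

```yaml
# Sketch of the VirtualService during a 10% canary step.
# The weights are rewritten by the Argo Rollouts controller at each step.
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: film-storage
spec:
  hosts:
    - film-storage              # assumption: placeholder host
  http:
    - name: primary             # route name referenced in trafficRouting.istio
      route:
        - destination:
            host: film-storage  # assumption: placeholder service host
            subset: stable
          weight: 90            # traffic kept on the old version
        - destination:
            host: film-storage
            subset: canary
          weight: 10            # traffic sent to the new version
```

When the rollout finishes, the stable subset points to the new version and its weight returns to 100.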

Deploy film-storage new version rollout finished

When all the rollout steps have finished, the new version 0.3 is available to all users!

In addition, Argo Rollouts will scale down the old version:

    oc argo rollouts get rollout film-storage --watch -n %USER%-canary-service-mesh

    NAME                                      KIND         STATUS        AGE    INFO
    ⟳ film-storage                            Rollout      ✔ Healthy     74m
    ├──# revision:2
    │  ├──⧉ film-storage-68c88f96cd           ReplicaSet   ✔ Healthy     7m35s  stable
    │  │  ├──□ film-storage-68c88f96cd-sxvxz  Pod          ✔ Running     7m34s  ready:2/2
    │  │  ├──□ film-storage-68c88f96cd-bnbfb  Pod          ✔ Running     2m27s  ready:2/2
    │  │  ├──□ film-storage-68c88f96cd-g49j2  Pod          ✔ Running     2m27s  ready:2/2
    │  │  ├──□ film-storage-68c88f96cd-llzx5  Pod          ✔ Running     2m27s  ready:2/2
    │  │  └──□ film-storage-68c88f96cd-m6wnv  Pod          ✔ Running     2m27s  ready:2/2
    │  ├──α film-storage-68c88f96cd-2.1       AnalysisRun  ✔ Successful  7m34s  ✔ 1
    │  └──α film-storage-68c88f96cd-2         AnalysisRun  ✔ Successful  7m34s  ✔ 1
    └──# revision:1
       └──⧉ film-storage-7587cfcc54           ReplicaSet   • ScaledDown  74m